Overview

Artificial intelligence (AI) holds promise for a positive future for humanity. For example, AI could help us fight climate change by optimising power usage. Just as fire and electricity fundamentally changed human life (quote), AI may increase overall human productivity, offsetting the effects of an ageing population and raising humanity’s overall welfare.

At present, however, AI is far from perfect. One of the greatest issues is that AI systems are not yet trustworthy: it is difficult to understand when and why they fail. This is especially true when models are deployed in environments that differ, even slightly, from the training environment. Even worse, naive or malicious application of AI systems harms humanity by amplifying political polarisation, treating minority groups unfairly, and jeopardising human liberty and free will.

This leads to our study of Trustworthy AI. We aim to understand the trustworthiness of current AI systems and develop new technologies that enhance their trustworthiness. We focus on three sub-topics among other important topics:

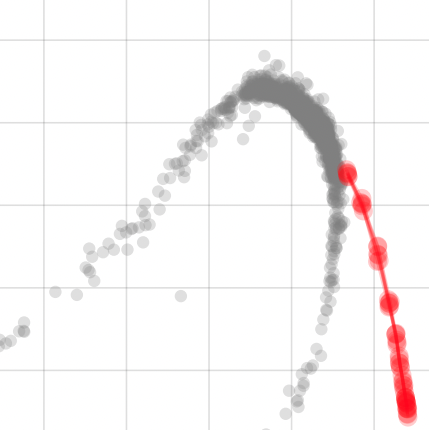

- Robustness: AI needs to generalise better to environments that are possibly different from the training environment.

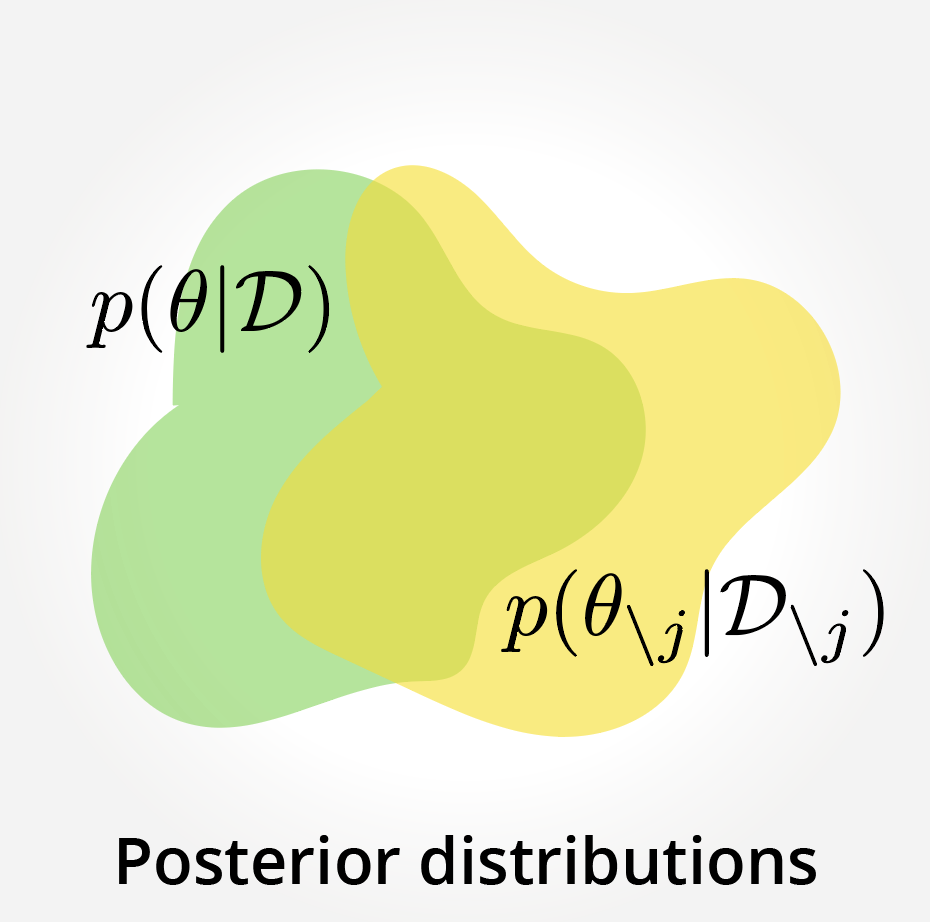

- Uncertainty: AI needs to be aware of its own lack of knowledge and capabilities and communicate this to humans.

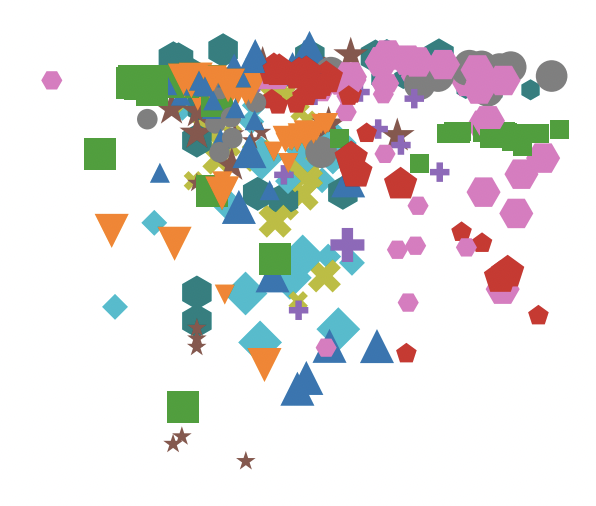

- Explainability: the mechanism behind AI’s recognition and decisions needs to be understandable to humans.

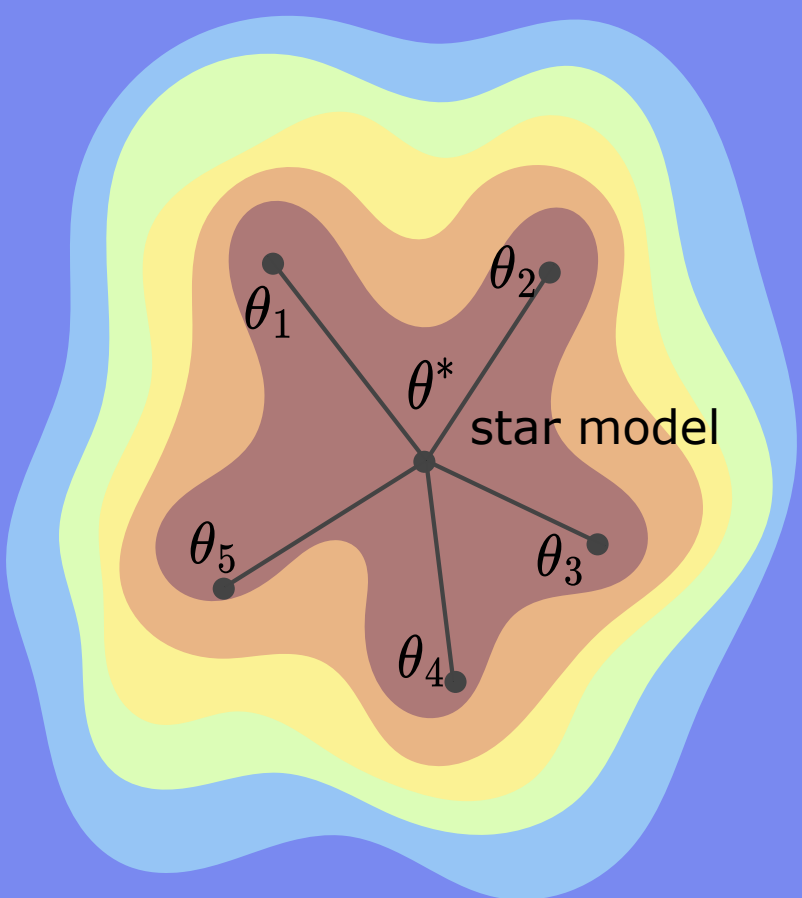

Fortunately, we are not alone in this effort. Many other research labs around the world make important contributions to Trustworthy AI. Our group distinguishes itself by striving for working solutions that are widely applicable and can be deployed at large scale. We thus name our group Scalable Trustworthy AI. To achieve scalability, we commit ourselves to the following principles:

- Simple is better than complex. Scalability and applicability are inversely correlated with complexity.

- Understand and then solve. You can only solve a problem when you understand it.

- Do not follow a dead end. When an approach is fundamentally limited in the long run, don’t take it.

With these principles in mind, we do research on Scalable Trustworthy AI technologies to guide the field in the right direction. We hope to help mitigate the negative side effects of AI and accelerate AI-led advances for the future of humanity.

For prospective students: You might be interested in our internal curriculum and guidelines for a PhD program: Principles for a PhD Program.

The STAI group is part of the Tübingen AI Center and the University of Tübingen. STAI is also within the ecosystem of the International Max Planck Research School for Intelligent Systems (IMPRS-IS) and the ELLIS Society.